From Wrinkles to Robot Folding

Building a Cloth Folding Physical AI Agent with Cyberwave

By Abhishek Pavani & Yash Shukla (Team BostonX)

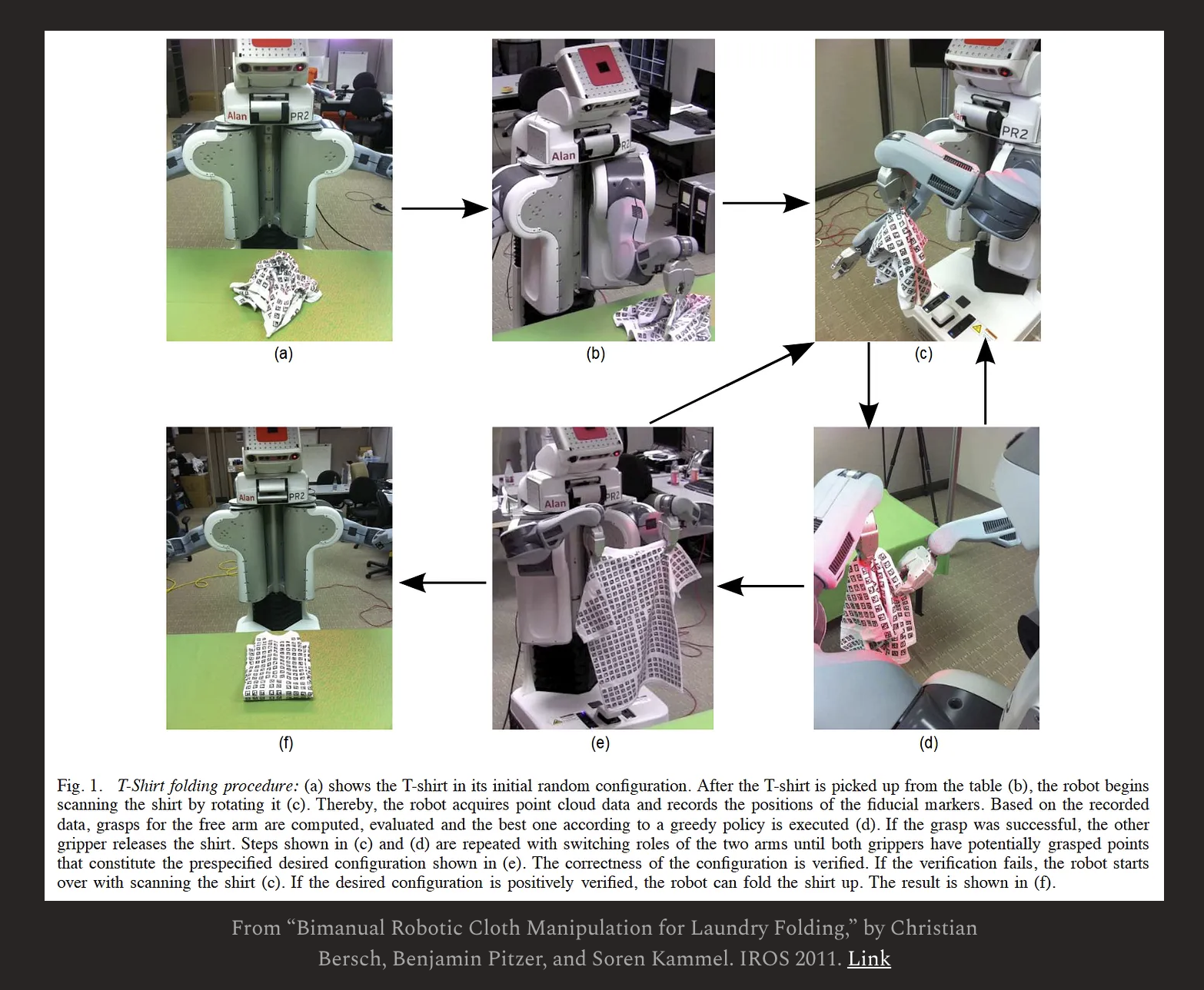

Folding laundry is widely considered the "chore that Americans hate the most", and we know countless people who would thank us for building a robot that can do it. For roboticists, it is a "nightmare": traditional programming cannot predict the chaotic configurations of floppy fabric. Unlike a rigid object, a piece of cloth can take on a practically infinite number of shapes, so point-cloud markers and hard-coded geometry are not enough.

This beautiful Substack post by Chris Paxton captures precisely "why everyone in the robotics space is folding laundry."

The "Big Picture"

Most robots are pretty expensive. Our goal was to use low-cost SO-101 arms to find out whether complex cloth manipulation is even feasible on a budget. By combining the Cyberwave platform with an efficient Vision-Language-Action (VLA) model, SmolVLA, we set out to show that a robot can learn this "unfoldable" task from as few as 50 human demonstrations.

This guide provides a thorough, step-by-step walkthrough of the entire pipeline, from unboxing your hardware to watching your robot autonomously fold a napkin.

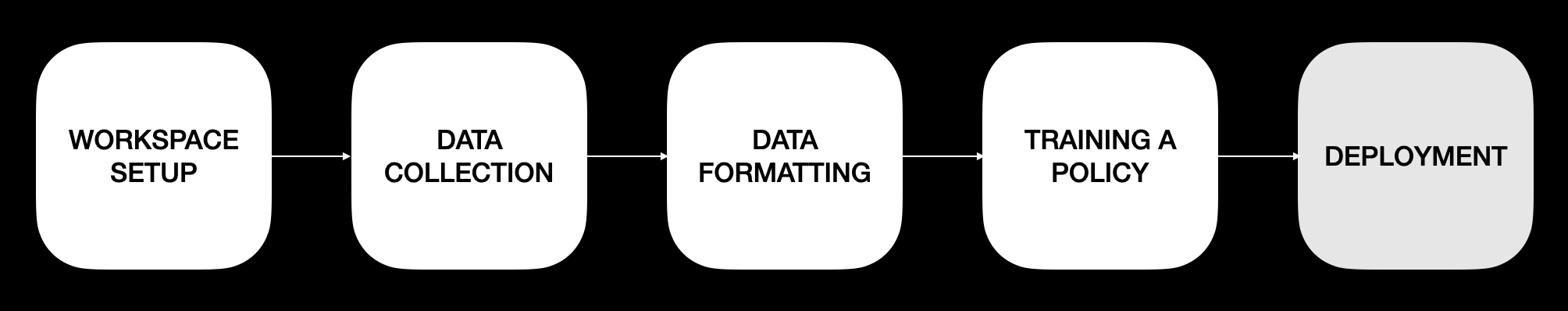

The Cyberwave Approach: A 5-Step Pipeline

To tackle cloth folding, the Cyberwave platform streamlines the transition from human teleoperation to autonomous robotic action through a structured five-step approach:

- Workspace Setup: Configuring the physical environment.

- Data Collection: Recording human demonstrations.

- Data Formatting: Preparing data for the AI model.

- Training a Policy: Teaching the model to act.

- Deployment: Running the agent autonomously in the real world.

Step 1: Workspace Setup (Initial Configuration)

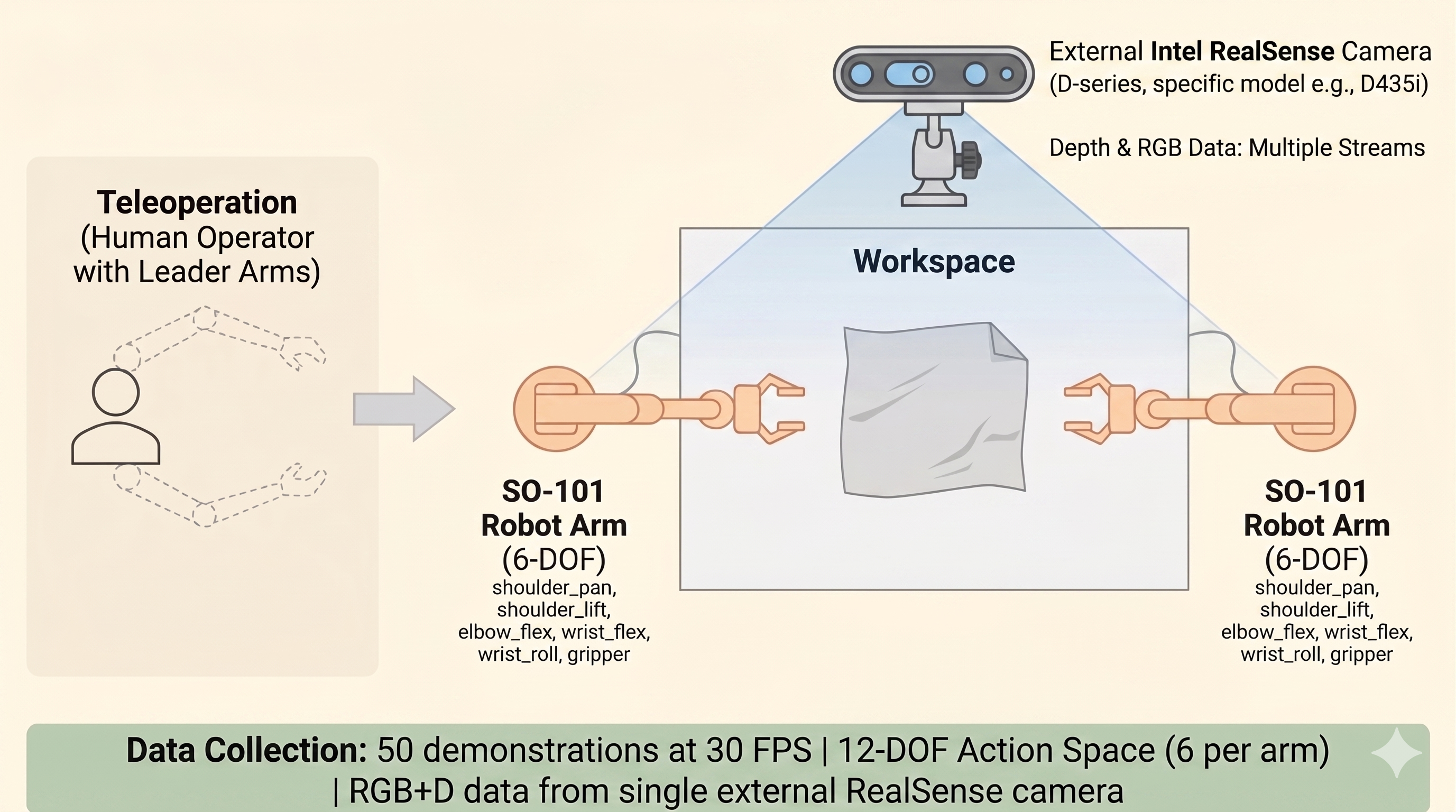

Setup begins with a bimanual (two-armed) hardware configuration. This setup mimics human dexterity, which is essential for handling large pieces of fabric.

- Robot Arms: Two SO-101 6-DOF arms (total 12-DOF action space).

- Vision: An external Intel RealSense Camera (D435i or D455) for RGB and depth streams.

- Joint States: The 12-DOF space includes shoulder pan, shoulder lift, elbow flex, wrist flex, wrist roll, and gripper for each arm.
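The joint list above implies a fixed ordering for the 12-element action vector. Here is a minimal sketch of one way to pin that ordering down in code. The arm labels `left` and `right`, the `arm.joint` naming scheme, and the helper names `pack_action` and `unpack_action` are our own illustrative assumptions, not identifiers from Cyberwave or LeRobot.

```python
from typing import Dict, List

# Joint order per arm, taken from the list above.
JOINTS_PER_ARM: List[str] = [
    "shoulder_pan",
    "shoulder_lift",
    "elbow_flex",
    "wrist_flex",
    "wrist_roll",
    "gripper",
]

# Hypothetical arm labels; the write-up does not name the two arms.
ARMS: List[str] = ["left", "right"]

# Flat, ordered list of the 12 joint names, e.g. "left.shoulder_pan".
ACTION_NAMES: List[str] = [
    f"{arm}.{joint}" for arm in ARMS for joint in JOINTS_PER_ARM
]


def pack_action(targets: Dict[str, float]) -> List[float]:
    """Turn a name -> value mapping into a 12-element vector in fixed order."""
    missing = [name for name in ACTION_NAMES if name not in targets]
    if missing:
        raise KeyError(f"missing joint targets: {missing}")
    return [float(targets[name]) for name in ACTION_NAMES]


def unpack_action(vector: List[float]) -> Dict[str, float]:
    """Inverse of pack_action: label each element of the vector by joint name."""
    if len(vector) != len(ACTION_NAMES):
        raise ValueError(
            f"expected {len(ACTION_NAMES)} values, got {len(vector)}"
        )
    return dict(zip(ACTION_NAMES, vector))
```

Keeping a single source of truth for the joint order helps avoid the classic bug where recorded data and the deployed policy disagree about which index is which joint.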

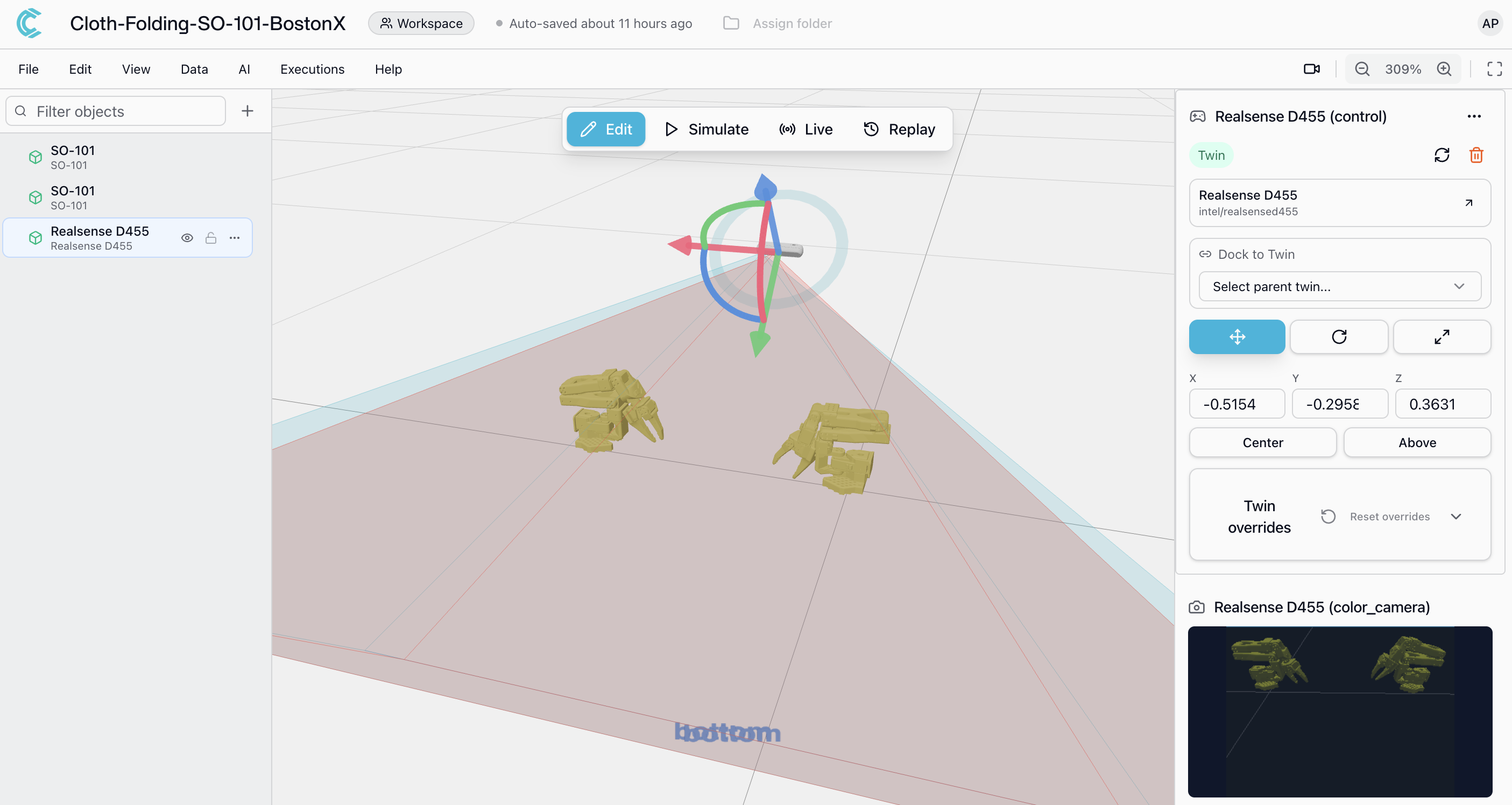

Cyberwave Integration

After setting up the workspace, we used the Cyberwave platform to set up a simulation environment and connect the SO-101 arms to their digital counterpart, or "twin". The Cyberwave documentation and GitHub repo do a thorough job of explaining the steps to set this up.

Using the Cyberwave CLI, you can initialize your "Digital Twin" to manage device connections. Once the environment is set up, the digital twin will look something like this.

Calibrating the arms and connecting them to the digital twin made it really easy to control the arms for teleoperation, which got us to the point of collecting demonstration data for the project.

Step 2: Data Collection (Teleoperation)

We decided to follow the latest advances in agentic AI and go the imitation learning route, where the policy learns from recorded human demonstrations instead of hand-written rules. For a complex task like cloth folding, our goal was to collect 50 demonstrations recorded at 30 FPS.

During recording, Cyberwave handles the high-frequency synchronization of the 12-DOF action space and the multi-stream RGB+D data.
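To make "synchronization" concrete, here is a minimal sketch of nearest-timestamp alignment between camera frames and joint-state samples. This is a generic technique shown under our own assumptions, not a description of how Cyberwave does it internally. The function names and the half-frame skew tolerance are hypothetical.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple

FPS = 30  # target recording rate from the text


def nearest_index(sorted_ts: Sequence[float], t: float) -> int:
    """Return the index of the timestamp in sorted_ts closest to t."""
    if not sorted_ts:
        raise ValueError("no timestamps to search")
    i = bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    # Pick whichever neighbour is closer; ties go to the earlier sample.
    return i if sorted_ts[i] - t < t - sorted_ts[i - 1] else i - 1


def align(
    frame_ts: Sequence[float],
    joint_ts: Sequence[float],
    max_skew: float = 0.5 / FPS,  # assumed tolerance: half a frame period
) -> List[Tuple[int, int]]:
    """Pair each camera frame with its nearest joint sample.

    Frames with no joint sample within max_skew seconds are dropped.
    Both timestamp sequences must be sorted in ascending order.
    """
    pairs: List[Tuple[int, int]] = []
    for frame_idx, t in enumerate(frame_ts):
        joint_idx = nearest_index(joint_ts, t)
        if abs(joint_ts[joint_idx] - t) <= max_skew:
            pairs.append((frame_idx, joint_idx))
    return pairs
```

Dropping frames that have no nearby joint sample is a deliberately conservative choice here: a mislabeled frame can teach the policy a wrong action, while a missing frame only shortens the episode slightly.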

Step 2a: Data Post-Processing (LeRobot Conversion)

Once the data has been collected using the platform, we post-process it into the LeRobot format, which is then used for training. We also built a visualizer for inspecting our converted LeRobot episodes. You can monitor the "health" of your data in real time using the integrated Rerun.io visualizer, which displays joint state graphs alongside live video.

More on this can be found in the README here.
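As a rough illustration of what the conversion step produces, the sketch below turns raw recorded samples into per-frame records. The key names (`observation.state`, `action`, `observation.images.<camera>`) follow the naming convention we understand LeRobot datasets to use; the camera name `front`, the raw sample fields (`joint_positions`, `commanded_positions`, `rgb_path`), and both function names are hypothetical. This is a plain-Python sketch under those assumptions, not the actual converter from the repo and not a call into the LeRobot library.

```python
from typing import Any, Dict, List

FPS = 30        # recording rate from the text
STATE_DIM = 12  # two arms x six joints


def to_frame(
    sample: Dict[str, Any],
    frame_index: int,
    episode_index: int,
    task: str,
) -> Dict[str, Any]:
    """Map one raw sample (hypothetical field names) to a LeRobot-style frame."""
    state = list(sample["joint_positions"])
    action = list(sample["commanded_positions"])
    if len(state) != STATE_DIM or len(action) != STATE_DIM:
        raise ValueError(f"expected {STATE_DIM}-dimensional state and action")
    return {
        "observation.state": state,
        "action": action,
        "observation.images.front": sample["rgb_path"],  # assumed camera key
        "timestamp": frame_index / FPS,  # seconds since episode start
        "frame_index": frame_index,
        "episode_index": episode_index,
        "task": task,
    }


def convert_episode(
    samples: List[Dict[str, Any]], episode_index: int, task: str
) -> List[Dict[str, Any]]:
    """Convert every sample of one demonstration, numbering frames from 0."""
    return [
        to_frame(sample, i, episode_index, task)
        for i, sample in enumerate(samples)
    ]
```

Validating the state and action dimensions at conversion time surfaces a dropped joint reading here, instead of as a shape error in the middle of training.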

Step 3: Training the SmolVLA Model (The Brain)

The core of the autonomous behavior is the SmolVLA model. This Vision-Language-Action model is compact (approx. 450M parameters), making it efficient enough to run on edge hardware like the NVIDIA Jetson Orin Nano Super.

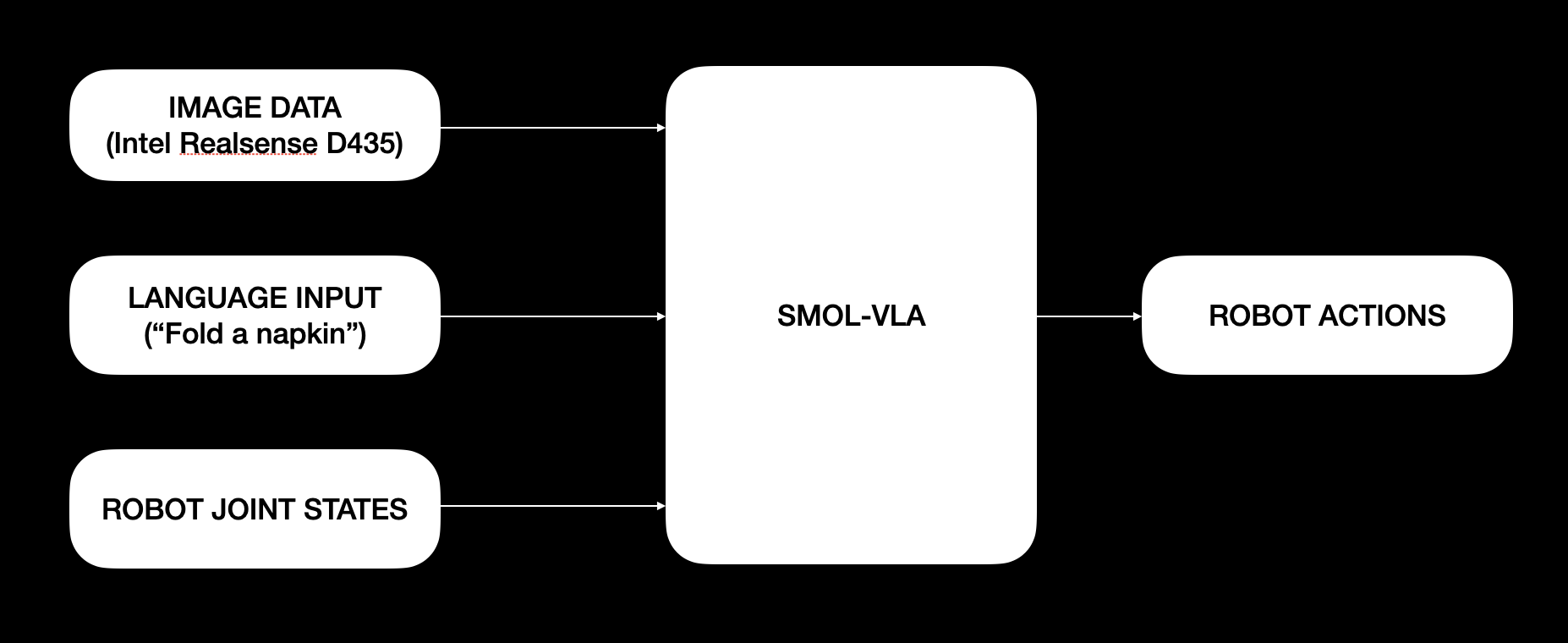

The model functions as a direct mapping: it takes a Language Input (e.g., "Fold a napkin") and Image Data from the RealSense camera to predict the next Robot Actions (joint states).

The docs in the GitHub repo go into more detail about this step.
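The input/output contract described above (instruction plus image in, joint targets out) can be written down as a small interface. The sketch below is only that: the `Observation` and `Policy` types are hypothetical names, feeding the current joint state as an extra input is our assumption, and `HoldPosePolicy` is a trivial placeholder that stands in for the trained model so the surrounding code can be tested without it. It is not SmolVLA and does not use the SmolVLA API.

```python
from dataclasses import dataclass
from typing import List, Protocol

ACTION_DIM = 12  # two arms x six joints


@dataclass
class Observation:
    instruction: str           # language input, e.g. "Fold a napkin"
    image: bytes               # encoded RGB frame (placeholder type)
    joint_state: List[float]   # current joint positions (assumed input)


class Policy(Protocol):
    def predict(self, obs: Observation) -> List[List[float]]:
        """Return a short sequence ("chunk") of 12-dimensional joint targets."""
        ...


class HoldPosePolicy:
    """Placeholder policy: repeats the current pose for `horizon` steps."""

    def __init__(self, horizon: int = 5) -> None:
        self.horizon = horizon

    def predict(self, obs: Observation) -> List[List[float]]:
        if len(obs.joint_state) != ACTION_DIM:
            raise ValueError(f"expected {ACTION_DIM} joint values")
        # Copy the state for each step so callers can mutate steps independently.
        return [list(obs.joint_state) for _ in range(self.horizon)]
```

Coding the control loop against an interface like `Policy` means the placeholder can be swapped for the trained model later without touching the loop itself.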

Step 4: Deployment & Success (Autonomy)

Once trained, the policy is deployed back to the physical arms. A critical technical detail is the use of Asynchronous Inference, which allows the robot to calculate the next set of movements while it is still executing the current ones, ensuring fluid and continuous motion.
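The idea of computing the next movements while the current ones are still executing can be sketched with a producer thread and a small queue. This is a simplified illustration, not the inference stack we actually ran: `run_async`, `predict_chunk`, and `execute` are hypothetical names, and a real system would feed fresh camera observations into each prediction where this sketch passes only a chunk index.

```python
import queue
import threading
from typing import Callable, List, Optional

Action = List[float]


def run_async(
    predict_chunk: Callable[[int], List[Action]],
    execute: Callable[[Action], None],
    num_chunks: int,
) -> None:
    """Execute action chunks while the next chunk is computed in parallel."""
    # maxsize=1: at most one finished chunk waits in the queue, so the
    # predictor cannot run far ahead of what the robot is doing.
    chunks: "queue.Queue[Optional[List[Action]]]" = queue.Queue(maxsize=1)

    def worker() -> None:
        for k in range(num_chunks):
            chunks.put(predict_chunk(k))  # blocks while the queue is full
        chunks.put(None)  # sentinel: no more chunks

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()

    while True:
        chunk = chunks.get()  # usually ready already, so motion does not pause
        if chunk is None:
            break
        for action in chunk:
            execute(action)  # the worker computes the next chunk meanwhile
    thread.join()
```

A usage example with stand-in callables:

```python
executed: List[Action] = []
run_async(
    predict_chunk=lambda k: [[float(k)] * 12 for _ in range(3)],
    execute=executed.append,
    num_chunks=4,
)
```

The bounded queue is the main design choice: it trades a little staleness (the next chunk is based on a slightly older view of the world) for motion that does not stall while the model is thinking.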

The Cyberwave platform also makes it much easier to test the policy in simulation before deploying it onto the real robot.

Unfortunately, one of the motors on the SO-101 follower arms burnt out during development, so we do not have a video showcasing the autonomous behaviour. That said, building with the Cyberwave platform was a pleasant experience.

Conclusion

By moving from rigid programming to the Cyberwave Physical AI pipeline, we can transform the "robotic nightmare" of laundry into a solvable task. We believe the combination of dual SO-101 arms and the SmolVLA model points to the future of household robotics.

Bonus!

We did not want to stop at just folding a napkin, so here is a teaser of us working on the next goal: getting the arms to fold a t-shirt. It's messy right now, but we will get there!